Regulating Real Problems: The First Principle of Regulatory Impact Analysis

For more than three decades, presidents have required executive branch regulatory agencies to identify the systemic problems they wish to solve when issuing major regulatory actions. The first principle in Executive Order 12866, which governs executive branch regulatory review, is that an agency shall “identify the problem that it intends to address (including, where applicable, the failures of private markets or public institutions that warrant new agency action) as well as assess the significance of that problem.” This principle reflects the sensible notion that before proposing regulation, regulators should understand the root cause of the problem the proposed regulation is supposed to solve.

Unfortunately, in practice regulatory agencies often decide what they want to do, write up the proposed regulations, and then hand the proposals to their economists. Often it is only at this late stage in the process that agencies identify the problems they are trying to solve. Congress should begin the regulatory reform process by requiring agencies to analyze problems and alternative solutions before they write regulations. This would put assessment of the problems where it belongs: before regulators choose solutions.

How Well Do Agencies Analyze Problems?

The Mercatus Center’s Regulatory Report Card—an in-depth evaluation of the quality of the regulatory analysis agencies conduct for major regulations—finds that agencies often fail to analyze the nature and significance of the problems they are responsible for solving.

The Report Card evaluates agencies’ economic analyses, known as regulatory impact analyses (RIAs), which have been required for major regulations since 1981. The purpose of an RIA is to identify problems, alternative solutions, and benefits and costs of these alternatives.

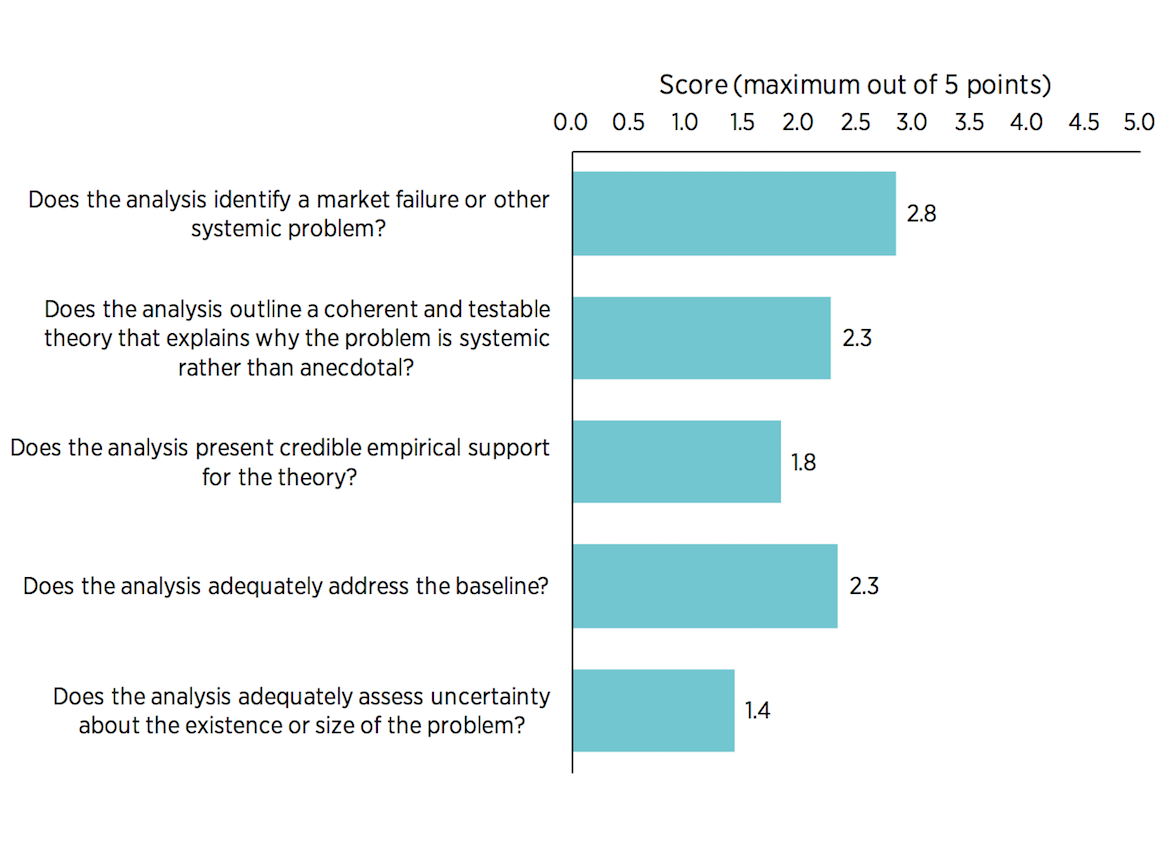

The Report Card includes five diagnostic questions that assess how well an agency has analyzed a systemic problem:

1) Does the agency identify a market failure or other systemic problem? Can the agency name a market failure, government failure, or other problem whose origins can be traced to incentives or institutions rather than the misbehavior of a few bad actors that could be dealt with on a case-by-case basis?

2) Does the analysis contain a coherent and testable theory explaining why the problem is systemic rather than anecdotal? Does the agency explain how the problem it is trying to fix stems from deficiencies in incentives or institutions that are likely to persist?

3) Does the analysis present credible empirical support for the theory? Does the agency have substantial evidence—not just anecdotes—showing that the theory is actually right?

4) Does the analysis adequately address the baseline? That is, does it address what the state of the world is likely to be going forward in the absence of the new regulation?

5) Does the analysis adequately address uncertainty about the existence or size of the problem? Does the agency consider whether it has accurately diagnosed the problem, measured its size, and appropriately qualified its claims?

Regulations receive a score ranging from 0 (no useful content) to 5 (comprehensive analysis with potential best practices). Figure 1 shows that the average scores for proposed, prescriptive regulations on each question range from 1.4 to 2.8 for the years 2008 to 2013. Prescriptive regulations are rules that impose mandates or restrictions of some kind on private citizens, business firms, and state, local, or tribal governments. The RIAs associated with these regulations often failed to identify systemic problems at all, or they merely offered a few assertions with little coherent theory of cause and effect or sound evidence.

The average overall score for identifying a systemic problem was 2.2 out of 5 points. That is 44 percent of the available points, a failing grade by most standards. No regulation has ever received an overall score of 5 based on these five criteria. In fact, 37 of the 130 regulations examined (about 28 percent) received an overall rating of 0 or 1. This means the regulatory analysis had little or no content assessing the systemic problem at all, in spite of a clear directive in Executive Order 12866. Instead of assessing systemic problems, many notices of proposed rulemaking or their accompanying RIAs merely cite the statute that gives the agency authority to issue the regulation. But citing a statute is far from defining and analyzing a problem.

Figure 1. Average Scores, 2008–2013

Source: “Regulatory Report Card,” Mercatus Center at George Mason University, https://www.mercatus.org/reportcards.

The Good, the Bad, and the Ugly: Examples of Problem Analysis in RIAs

The Good: Best Practices

In 2012, the US Department of Agriculture (USDA) proposed a regulation to modernize food inspections at poultry slaughter facilities as part of President Obama’s retrospective regulatory review initiative. The RIA for the proposed rule identified inefficiencies in the system of monitoring poultry for pathogens, and it highlighted how these inefficiencies created health risks for consumers. The old system was designed when visible animal diseases in poultry were more prevalent. As the marketplace evolved, this tended to focus inspector attention on physical defects that present minimal food safety risks. The old system also created unnecessary bottlenecks because it was most suitable for low-volume facilities, thereby reducing the incentive for facilities to improve processing methods, increase efficiency, and innovate in other ways.

The proposed rule sought to reduce health risks while enabling poultry processors to process birds more quickly. It also allowed facilities the option to operate under a revised version of the old standard or under a new inspection system. This flexibility was added to alleviate burdens on small businesses, which were largely expected to prefer the old system.

In the analysis, the agency explained why the problem was systemic in nature. The previous regulation was developed when product volumes were lower and when visibly detectable animal diseases were more prevalent. Moving inspectors to offline sampling and analysis activities could increase line speeds while also reducing illnesses. Inspectors would evaluate carcasses later in the process after the birds had been cleaned, allowing facilities the flexibility to find innovative approaches to catching contaminated poultry earlier in the process and making visible defects easier to catch at the inspection stage. Inspectors would refocus efforts toward offline verification activities. The analysis further emphasized how giving added flexibility to firms would encourage them to find innovative ways to improve sanitation at processing facilities, such as identifying unacceptable carcasses earlier in the production process.

But the USDA did not just theorize about a problem; it provided evidence. The analysis presented results from a pilot program, showing how increases in offline sampling activities were followed by reductions in contamination rates and improvements in compliance with sanitation standards. The Food Safety and Inspection Service also produced a risk assessment in which the agency considered whether offline inspection activities might increase human illness from Salmonella and Campylobacter in chickens. The agency found the probability of increases in risk to be small, with a greater chance of illnesses falling in response to reallocating inspection activities.

To summarize, the USDA identified a problem—an outdated inspection system that missed food contamination and slowed production lines; offered a theory for why the system was not working as efficiently as was desirable—it was an obsolete system that relied on visual inspection to detect infection and was ill-suited for high-volume facilities; and proposed a solution that was flexible and informed by results from a risk assessment and the success of a trial program.

Despite adhering to these best practices, the analysis did have a few shortcomings. The RIA did little to address uncertainties about the size of the bottleneck problem. The analysis admitted that smaller processing plants might not experience these problems to the same degree as large plants, but it provided no estimate of the size of these effects and did not consider if this uncertainty would substantially change the expected results of the proposed regulation.

The Bad: Incomplete Practice

Many more RIAs go only partway toward identifying a concrete problem in need of a regulatory solution. Some RIAs theorize about a problem but provide little hard evidence about its scope or source. The 2015 rule from the Food and Drug Administration (FDA) on manufacturing practices and preventive controls for animal food is one example.

The FDA proposed the regulation in 2013 and issued the final regulation in 2015. The RIA accompanying the regulation theorized that because consumers cannot always determine the source of contamination in animal food, neither the unregulated market nor the legal system will provide adequate incentives for production of safe animal food. It reasoned that unless the firm responsible for contamination faces a 100 percent probability that its culpability will be discovered, the firm will make investments in food safety that are below the socially optimal level. The analysis provided no evidence about the actual state of consumers’ or producers’ knowledge of animal food quality or contamination, no evidence about the effectiveness of the legal system or branding in promoting animal food safety, and no information about how much the safety of animal food falls short of the optimal level.

A comment on the proposed regulation submitted by Mercatus Center scholars suggested that the primary benefits of the regulation would stem from preventing contamination of pet food, not livestock feed. Confining the regulation to pet food would significantly reduce the cost. The FDA missed this distinction because it did not identify the root causes of the health problems the regulation was intended to prevent. The final RIA, which relied partly on methods and data sources from the Mercatus Center comment, estimated the regulation would generate $10.1 million to $138.8 million in benefits annually by protecting humans and pets from contaminated food. It presented no empirical evidence of benefits for livestock, relying instead on a survey of experts who offered their opinions on how effective the rule would be in preventing contamination of livestock feed. A survey of experts is better than no evidence at all, but it is a weak reed on which to rest a major mandate that would affect all firms that produce, pack, handle, or store livestock feed.

The Ugly: Worst Practices

Many rules fail to incorporate critical aspects of Executive Order 12866’s requirement to identify a systemic problem. For example:

A. When discussing the need for a regulation, many RIAs simply cite the law that authorizes the regulation. A 2010 Environmental Protection Agency RIA addressing sewage and sludge incineration units states that standards for the burning of sewage sludge should be set in order to stay in compliance with the Clean Air Act. The RIA mentions several pollutants that result from the incineration process but never connects this to a theory or evidence of externalities.

B. Another common shortcoming in analysis occurs when the agency asserts a problem but offers no evidence that the assertion is true. An example of such armchair theorizing comes from an Office of Personnel Management (OPM) regulation establishing multi-state health plans under the Patient Protection and Affordable Care Act. The OPM asserted that individuals searching for individual or small group health insurance had limited options, yet the agency provided no evidence to support such claims.

C. Sometimes agencies assert that a problem exists, but the assertion is contradicted by empirical evidence. The RIA accompanying a rule proposed by the USDA that required mandatory inspection of catfish products claimed to identify seven outbreaks and 66 Salmonella illnesses from consuming catfish during the years 1973 to 2007. However, a risk assessment accompanying the RIA noted that only one suspected Salmonella outbreak from catfish occurred in the 20 years prior to the analysis. Also problematic was that the USDA had no data on the concentration of Salmonella in catfish, so in its risk assessment it assumed results were the same as in poultry. Cooking catfish may be the primary reason for the relatively low number of outbreaks. Another explanation for the low risk is that catfish is already subject to FDA Hazard Analysis and Critical Control Point regulations, and many catfish processors voluntarily pay the National Marine Fisheries Service for seafood safety inspection and certification.

Conclusion

When analysts identify a real problem, measure its scope, and trace it back to a root cause, regulators have a better chance of accomplishing their goals. Executive Order 12866 was issued precisely to ensure that this kind of rational decision-making occurs before regulations are enacted that force the public to expend real resources.

When analysts fail to identify and evaluate the problem they are trying to solve, regulators are more likely to respond to anecdotes rather than to widespread problems, and to address symptoms of problems rather than their root causes. This is akin to mopping up a wet floor every evening when the real problem is a leaky roof. To be effective at their jobs, regulators need to know what causes the problems they seek to solve.
