A New HOAP

A Q&A with Dr. Robert Graboyes of the Healthcare Openness and Access Project

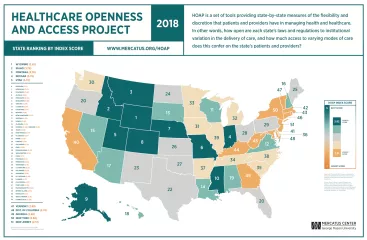

The Healthcare Openness and Access Project (HOAP), directed by Darcy N. Bryan, Jared Rhoads, and Robert Graboyes, presents state-by-state measures of the flexibility and discretion that patients and providers have in managing health and healthcare. HOAP was originally issued in December 2016. In June 2018, the numbers were revised to reflect changes in state laws in the interim and to reflect modest changes in the HOAP methodology.

Below, Robert Graboyes helps explain more about the project by answering a number of questions, ranging from why the project was launched, to how different audiences can make use of the findings.

You helped launch the Healthcare Openness and Access Project (HOAP) back in 2016. What about the healthcare policy world made you think it was worth doing?

For decades, “healthcare debate” in the United States has meant “insurance” and “federal.” Hence, we’ve argued over Medicare, Medicaid (a federal/state program), “Obamacare,” and single payer. But I’ve come to believe that these laws matter less than we’ve assumed, because the different visions for federal insurance law aren’t all that different from one another. And I’ve come to appreciate how profoundly important state laws, regulations, and medical governance are to the quantity and quality of healthcare we all receive. HOAP was a way to quantify the impact of those state data, to compare states with one another, and to spark conversations around the country about what really matters in healthcare.

Obviously a big focus of HOAP is the importance of state policy in healthcare. Was there a particular issue, regulation, or news story that first got you interested in or focused on the differences between state healthcare policies?

It was more about the multiplicity of state policies than about any single one of them. Certificate of need (CON) laws discourage the provision of services and raise prices. Scope-of-practice regulations limit the supply of healthcare services and funnel usage toward more expensive providers (e.g., doctors, instead of nurses, who may be equally or better qualified for the particular tasks at hand). Corporate practice of medicine doctrine, a chunk of early 20th century legal detritus, inhibits the ability of providers to take advantage of modern managerial concepts to improve quality and reduce costs. Occupational licensing and regulation laws decrease the supply of healthcare resources. States inhibit innovations like telemedicine and direct primary care. Malpractice laws, state insurance regulation, pharmaceutical regulations, tax laws … the list goes on. It’s a sort of death by a thousand cuts rather than one big thing.

You’ve just released an update to the project. What was the biggest change overall?

The changes were fairly modest. My co-authors—Darcy Bryan and Jared Rhoads—updated some of the data, and we made a few changes in the methodology. In some cases, the data changed because states changed their laws and regulations. In other cases, we changed the data because we gained access to better data than what we used in 2016. In a few other cases, we rethought our methodology and made changes accordingly. In some cases, an individual datum for a particular state changed substantially, and a few subindexes changed a lot. But as we expected beforehand, the overall state rankings didn’t change drastically. Florida jumped from 32 to 20 and Hawaii went from 29 to 18. The District of Columbia dropped from 40 to 48. But most states stayed pretty close to the 2016 rankings.

The index weighs a lot of factors in trying to sum up healthcare access. Taking off your scholar hat for a moment, which of these factors helps to motivate you and your research personally?

Again, it’s the multiplicity rather than one particular factor. We could be providing better care for more people at lower cost—year after year. Antiquated state laws and regulations stop us from doing so. And that does real harm to real people, particularly those at the lower end of the economic spectrum. If I had to pick one set of HOAP variables that fascinates me, it would be telemedicine. I personally have used telemedicine, and it helped rid me of a serious sinus infection 3 to 4 days earlier than would have been the case had I waited for an in-office appointment with my doctor. More than that, my then-92-year-old mother’s life was likely saved by telemedicine: she was having a video chat on her iPad with her grandson, a physician, who detected subtle clues that she was in the early stages of septic shock. One shouldn’t require a family member in the medical profession to access that sort of technological miracle.

Along those same lines, who is this index for? Is it for policymakers, everyday people, regulators, or someone else?

The primary audience is the policy community (policymakers, regulators, researchers). I’d like to think that the secondary audience is those in healthcare professions. The tertiary audience is everyone else.

You obviously have a lot of experience educating medical professionals. How do your experiences in the classroom shape how you think about healthcare access?

As someone who has taught medical professionals for the past two decades, I can tell you that while they tend to be brilliant and have sweeping knowledge of their subject areas, they can be quite provincial and surprisingly blind to developments in technology and the economics of healthcare delivery. These mid-career providers often have a vague sense that medical tourism, telemedicine, and intelligent home diagnostic devices exist, but they often have no idea of the extent of usage or the power of those developments. Attached to my cell phone is a small device that performs an electrocardiogram in 30 seconds, analyzes the results, and informs me instantly whether I seem to be experiencing potentially deadly atrial fibrillation. I regularly show this device to insurers, cardiologists, and medical school professors; often, they’ve never heard of the device, or they’ve heard about it but never seen it, or they were unaware of its power.

What do you hope (no pun intended) people do with this information after they read about their state?

More than anything, HOAP was designed to spark introspection and conversation across state lines and across professions. We want people in one state to see what other states are doing. We want them to pick up the phone and call colleagues in other states and say, “Your state seems to encourage telemedicine. I’ve always been skeptical of that technology. Tell me how that works.” We want lawmakers in one state to call lawmakers in other states and ask, “Your state lets nurse practitioners practice without supervision by a physician. Is that a good idea?” We want hospitals subject to certificate of need laws to be able to tell at a glance which states have no such laws, thereby spurring them to research the effects of dropping such laws. And we’d be very happy for researchers to say, “I don’t really agree with the methodology behind HOAP’s rankings, but the HOAP data are useful in constructing my own rankings.”

What do you NOT want people to do with HOAP?

We don’t want people to assume that the HOAP rankings are capital-T Truth, the be-all and end-all in interstate comparisons of healthcare. We note from the start that our choice of data and methodology contains lots of subjective elements. Our data are by no means perfect, and we could have built the database in many other ways. We do not want HOAP to become empty talking points for bashing or praising particular states. The worst example of that sort of misuse of data was the famous World Health Organization (WHO) rankings of national healthcare systems. Google “United States 37th healthcare” and you’ll get half a million hits. For two decades, people have used that factoid to trash the US healthcare system and extol the virtues of European-style systems. But in fact, WHO’s rankings were highly subjective, politically biased, and methodologically suspect. And yet, “US is 37th” has become a staple of academic papers and late-night comedians alike, and has done immeasurable damage to healthcare debates worldwide. (For what it’s worth, some of the WHO editors later renounced the rankings and how they were being used.) We tried hard not to repeat WHO’s mistakes, both in how to design such a study and in how to use it once it’s done. Modesty in claims is crucial to the process.