Instead of diagnosing the root cause of a problem before regulatory actions are proposed, agencies often decide what they want to do, write up the proposed regulations, and only then hand the proposals to their economists. This paper discusses the difficulties this process creates.

For more than three decades, the president has required executive branch agencies to identify the systemic problems they wish to solve when issuing major regulatory actions. In fact, the very first principle in Executive Order 12866, which governs executive branch regulatory review, is that an agency shall “identify the problem that it intends to address (including, where applicable, the failures of private markets or public institutions that warrant new agency action) as well as assess the significance of that problem.” This principle reflects the commonsense notion that before making a decision, decision makers should understand the root cause of the problem the regulation is supposed to solve.

Unfortunately, in practice regulatory agencies often decide what they want to do, write up the proposed regulations, and only then hand the proposals to their economists. Only at this late stage in the process do agencies identify the problems they are trying to solve. Congress should begin the regulatory reform process by requiring agencies to analyze the problems and alternative solutions before they decide to write regulations. This would put assessment of the problems where it belongs: before decisions get made about the solutions.

HOW WELL DO AGENCIES DEFINE THE PROBLEMS?

The Mercatus Center’s Regulatory Report Card, an in-depth evaluation of the quality of regulatory analysis agencies conduct for major regulations, finds that agencies often fail to analyze the nature and significance of the problems they are supposed to be trying to solve. The Report Card evaluates agencies’ economic analyses, known as Regulatory Impact Analyses (RIAs), which have been required for all major regulations since the early 1980s. The purpose of these RIAs is to identify the problems, alternative solutions, and the costs and benefits of these alternatives.

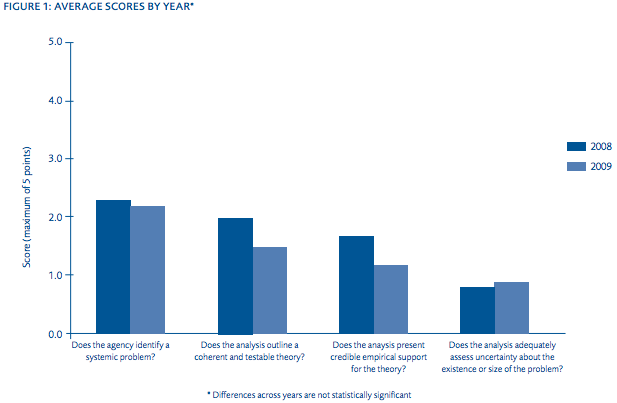

The Report Card includes four diagnostic questions that assess how well an agency analyzed a systemic problem:

- Does the agency identify a market failure or other systemic problem? In other words, can the agency at least name a market failure, government failure, or other problem whose origins can be traced to incentives or institutions rather than the misbehavior of a few bad actors that could be dealt with on a case-by-case basis?

- Does the analysis contain a coherent and testable theory explaining why the problem is systemic rather than anecdotal? In other words, does the agency explain how the problem it’s trying to fix stems from deficiencies in incentives or institutions that are likely to persist?

- Does the analysis present credible empirical support for the theory? In other words, does the agency have substantial evidence, not just anecdotes, showing that the theory is actually right?

- Does the analysis adequately address uncertainty about the existence or size of the problem? In other words, does the agency consider whether it has accurately diagnosed the problem, measured its size, and appropriately qualified its claims?

Regulations receive a score ranging from 0 (no useful content) to 5 (comprehensive analysis with potential best practices). Figure 1 shows that the average scores range between 0 and 2, with essentially no difference between the Bush and Obama administrations. The RIAs associated with these regulations often failed to identify systemic problems at all, or they merely offered a few assertions with little coherent theory of cause and effect or evidence.

Across 2008 and 2009, just one regulation scored a 5 for identifying a systemic problem: HUD’s proposed mortgage disclosure regulation. Ten out of 45 regulations scored a 0 for identifying a systemic problem in 2008, and 7 out of 42 regulations scored a 0 in 2009. A score of 0 means the regulatory analysis had little or no content assessing the systemic problem at all, in spite of the clear directive in Executive Order 12866. Instead of assessing the systemic problems, many proposed regulations or their accompanying RIAs merely cite the statute that gives the agency authority to issue the regulation. But citing a statute is not the same thing as defining and analyzing a problem.
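
To put the zero-score counts above on a common footing, the following minimal sketch converts them into shares of the regulations evaluated each year. The counts come from the text; the variable and function names are illustrative only.

```python
# Counts from the text: (regulations scoring 0, regulations evaluated)
# on the "identifies a systemic problem" question.
zero_scores = {2008: (10, 45), 2009: (7, 42)}


def share(count, total):
    """Return count/total as a percentage rounded to one decimal place."""
    return round(100 * count / total, 1)


shares = {year: share(count, total) for year, (count, total) in zero_scores.items()}

for year, pct in shares.items():
    print(f"{year}: {pct}% of evaluated regulations scored 0")
```

By this arithmetic, roughly 22 percent of the 2008 regulations and roughly 17 percent of the 2009 regulations scored a 0 on this question.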

BEST AND WORST PRACTICES IN ANALYSIS

Does the agency identify a market failure or other systemic problem?

Best practice: Identify a clear market failure or government failure. A rule that scored well on this criterion was the yearly migratory bird hunting rule that the Department of the Interior issues to set hunting seasons and bag limits. The rule’s RIA identifies a clear public goods problem: because no property rights are assigned in migratory birds, each hunter has an incentive to overhunt. Overhunting will occur and deplete birds. The analysis refers to this as an externality in which one hunter imposes costs on another. An international treaty responds to this problem by banning hunting unless governments impose limits. Therefore, the rule is necessary to allow hunting while preventing overhunting.

Worst practice: Simply cite the law that authorizes the regulation. Examples of this practice include the 2008 HHS Medicaid program premiums and cost sharing rule, which states that changes to a prior rule occurred because legislation directed HHS to do so. Another example is a 2009 EPA rule implementing changes to EPA’s renewable fuels program. The notice of proposed rulemaking simply states that the Energy Independence and Security Act requires the regulation to implement changes to the program. A law may authorize or require an agency to implement a rule, but even an agency with little discretion should at least identify and evaluate evidence of the problem that Congress thought the regulation would solve.

Does the analysis contain a coherent and testable theory explaining why the problem is systemic rather than anecdotal?

Best practice: Identify a problem and offer a theory to explain how the problem came to exist. The Department of Housing and Urban Development’s (HUD) 2008 rule revising home mortgage disclosures identified failures of both market and government institutions and offered a theory to explain these failures. HUD’s analysis suggested that the complexity of real estate transactions and some borrowers’ lack of information allowed mortgage providers to collect higher fees from less informed or less sophisticated borrowers. “Information asymmetry” is a classic market failure, and information disclosure can be a sensible remedy. But the existing disclosures actually exacerbated the problem by confusing borrowers, so HUD proposed to revise them.

Worst practice: Identify a potential problem, but fail to explain why the problem is systemic in nature and why voluntary decisions will not bring about a solution. In the analysis for the Department of Transportation’s (DOT) 2009 “ejection mitigation” standards for automobile side windows, data on injuries and deaths from side-window ejections suggest a problem exists. But the analysis does not trace the problem to its root cause by explaining why car buyers and automakers under-invest in safety. One might expect that consumers would be willing to pay for improvements in safety that are actually effective, and thus automakers would be willing to supply the improvements. In fact, DOT describes how some car companies already took steps voluntarily to make their vehicles safer, such as improved glazing that makes windows stronger and rollover sensors. This suggests that private markets may already be moving toward a solution to the problem. Since the analysis fails to identify a root cause of the safety problem, it is impossible to tell from DOT’s analysis whether this rule is necessary or how much of the problem the rule might eliminate.

Does the analysis present credible empirical support for the theory?

Best practice: Provide data-driven, empirical evidence that shows the problem exists and the agency’s hypothesis about the root cause is true. The 2009 EPA rule controlling emissions from marine compression-ignition engines seeks to reduce pollution from ships traveling in U.S. oceans and lakes. Studies show these emissions have a significant impact on ambient air quality far inland. Further studies associate certain types of emissions with serious public health problems, such as premature mortality and chronic bronchitis. These added costs to society are not reflected in the costs of providing the transportation services, which suggests the emissions are a classic externality.

Worst practice: Propose a theory, but provide no evidence to support this theory. In the 2008 federal acquisition regulation requiring government contractors to use the E-Verify system to determine employees’ immigration status, some theories are presented: firms might be tempted to hire less costly illegal workers in a tight labor market and the likelihood of a workforce enforcement action may not be high enough to justify the effort required to use E-Verify. But no empirical evidence is presented to support such theories, so the reader cannot tell if requiring E-Verify is necessary or the most effective solution.

Does the analysis adequately address uncertainty about the existence or size of the problem?

Best practice: Perform a “sensitivity analysis” to assess likely uncertainties about the existence or size of the problem. In the 2008 DOT rule on railroad tank car transportation of hazardous materials, the RIA examines uncertainty surrounding crash severity, which has a large effect on the size of the problem the regulation seeks to solve. It performs a sensitivity analysis to assess the effects of uncertainty about the consequences of releasing hazardous materials resulting from train accidents. DOT concluded that construction standards and speed restrictions would create substantial benefits regardless of this uncertainty.
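
As a rough illustration of what such a sensitivity analysis involves, the sketch below recomputes a rule's net benefit under low, central, and high assumptions about damage per hazardous-materials release and checks whether the conclusion survives all three. Every number and name here is hypothetical and chosen only for illustration; none is drawn from DOT's analysis.

```python
def net_benefit(damage_per_release, releases_avoided, compliance_cost):
    """Net benefit of a rule: avoided damage minus compliance cost."""
    return damage_per_release * releases_avoided - compliance_cost


def sensitivity(damage_scenarios, releases_avoided, compliance_cost):
    """Recompute net benefit for each assumed damage-per-release value."""
    return {
        name: net_benefit(damage, releases_avoided, compliance_cost)
        for name, damage in damage_scenarios.items()
    }


# HYPOTHETICAL inputs, in millions of dollars (not taken from any RIA).
scenarios = {"low": 2.0, "central": 5.0, "high": 12.0}
results = sensitivity(scenarios, releases_avoided=10, compliance_cost=15.0)

# The conclusion is robust if net benefits stay positive in every scenario.
robust = all(value > 0 for value in results.values())

for name, value in results.items():
    print(f"{name}: net benefit = {value} million")
print("Robust to severity uncertainty:", robust)
```

If the sign of the net benefit flipped in one of the scenarios, the uncertain input would be driving the conclusion, and the analysis would need better evidence on that input before the rule could be justified.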

Worst practice: No discussion at all. Tellingly, 29 regulations in 2008 and 26 in 2009 failed to discuss uncertainty about the existence or size of the problems or explain why there is no uncertainty. These regulations scored a 0 on this question, making it one of the most commonly overlooked areas in regulatory analysis. The typical rule either made no reference to uncertainty about the problem or acknowledged some uncertainty without elaborating on its degree. Cases where agencies attempted to measure uncertainty about the problem were the exception rather than the rule.

CONCLUSION

Congress should require agencies to rigorously analyze the nature and significance of the systemic problems they seek to solve and request comment on that analysis before making decisions. All underlying research and data should be published as well, so that interested members of the public can understand, replicate, and critique the agencies’ analyses. Identifying the systemic problems rules seek to solve is the first step towards a regulatory process that offers effective solutions to real problems.